The need to transfer data from one machine to another for processing has been around for a long time. Believe it or not, even before the internet was born (before APIs, web services, service buses, and even FTP), data transfer was being done with the help of some fairly archaic methods. (Some are still in use today.) They sometimes required automated scripts that dialed up 300 baud modems, negotiated a connection, and started data transmission. If you don’t know what a 300 baud modem is, that’s okay. Just search the web for kicks.

Later, in the 80s, the File Transfer Protocol (FTP) gained wider acceptance. More servers and clients adopted it, along with Telnet and other TCP/IP tools. FTP servers became a very powerful tool in the interface world, including on AS/400 and mainframe platforms.

Now, almost 50 years after its initial specifications were drawn up, and after multiple improvements (access from behind firewalls, binary transfers, compression, and later encryption with FTPS and SFTP), FTP continues to be a widely used method for transferring files.

Then, around 2010, numerous cloud storage providers, including Dropbox, OneDrive, Google Drive, Box, and at least 20 others (which may or may not exist today), showered the internet world with promises of unlimited storage, security, and instant backup.

Although they initially targeted the consumer market, mostly for storing photos, they soon moved up into the enterprise sector. Today they include full document storage capabilities and beyond.

Some of these cloud storage services are also being used for transferring data to partners, clients, and vendors. They are capable of storing large files, they can be accessed using APIs, and some even offer web callbacks for instant notification of file changes, creations, and deletions.

Which leads to the question: if you want to exchange data, which is better? FTP or cloud storage?

Let’s take a look.

Using FTP for Interfaces

For this discussion, when we say FTP we mean SFTP, the SSH File Transfer Protocol.

By itself, plain FTP is not a secure way of transferring data, because credentials and files travel unencrypted. SFTP is secure only if it is configured properly, on both the server and the client. Using SFTP with only a username and password is safer than using plain FTP, but it’s not as safe as creating SSH keys and using them along with login credentials.

But before we talk about security, you first need to host an SFTP server somewhere. There are a few ways to do this, depending on your requirements. You can host the server yourself, or you can host it with an infrastructure provider such as Amazon, Azure, or others.

My Kingdom for an FTP Server

If you are installing your own FTP server on Windows machines, I recommend Syncplify.me or BitVise. They both are great packages. But I have recently been impressed with BitVise, especially for its configuration, as it provides the ability to export and import settings in one go.

If you are using a virtual machine/server on a cloud provider such as Amazon or Azure, you will first have to select your operating system and then install the software. In both cases, you will need to open the firewall for the SFTP port (22 by default).

Needless to say, in both cases, the server must be exposed to the internet or at least to the party that you are exchanging data with. This will most likely require you to put the server in the DMZ or create a tunnel by port or by VPN. The complexity that these layers add to the interface cannot be ignored.

Phew. This is a lot of configuration just to get an SFTP server up and running. Fortunately, there are services that come fully ready for use with an SFTP server. SiteGround and A2Hosting, just to mention a couple, can be purchased as a pay-as-you-go service.

Still, although they come fully ready for use, you need to configure them with the right security and settings. But it’s much easier than having to do it all from scratch.

If your company policies do not allow you to go to a provider, enlist your IT services to set up an SFTP server on your company’s infrastructure that is accessible to your data partners. Be prepared: it might take a bit of time and a lot of testing to ensure that everything is secure, compliant, and reliable.

Ready for interfacing

Okay. Now you have your (S)FTP server all ready, and you can get started exchanging data with another party.

Where do you start?

First, you need to agree on the specifications of the interface. Who is sending the files? Where will you put them? How often? Naming conventions? What data format? Etc. These specifications are critical to ensure that you don’t lose data. A common way of exchanging files with FTP is to have the sender of the data place the files in an inbound folder with a date/time stamp. Over time, the folder will have multiple files of the same type with different date/time stamps. Something like:

inbound/
    sales-2019-07-30.csv
    sales-2019-07-29.csv
    sales-2019-07-27.csv
inprocess/
    sales-2019-07-26.csv
archive/
    sales-2019-07-25.csv
    sales-2019-07-24.csv
    sales-2019-07-23.csv
    ...
error/

Sample FTP folder structure showing files waiting to be processed, files currently being processed, and files that have been processed. The optional error folder holds files that were not successfully processed.
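A naming convention is easier to keep when both parties generate and check names with the same rule. The sketch below is only an illustration: it assumes the `<feed>-<YYYY-MM-DD>.csv` pattern seen in the sample folders, and the helper names `build_name`, `parse_name`, and `oldest_first` are made up for this example.

```python
import re
from datetime import date

# Assumed convention: <feed>-<YYYY-MM-DD>.csv, e.g. sales-2019-07-30.csv
NAME_PATTERN = re.compile(r"^(?P<feed>[a-z]+)-(?P<day>\d{4}-\d{2}-\d{2})\.csv$")


def build_name(feed: str, day: date) -> str:
    """Return the file name the sender should use for a given feed and day."""
    return f"{feed}-{day.isoformat()}.csv"


def parse_name(name: str):
    """Return (feed, date) for a conforming name, or None for anything else."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return None
    try:
        return match["feed"], date.fromisoformat(match["day"])
    except ValueError:
        # The digits fit the pattern but do not form a real calendar date.
        return None


def oldest_first(names):
    """Keep only conforming names and sort them by feed, then by date."""
    parsed = [(parse_name(name), name) for name in names]
    return [name for key, name in sorted(p for p in parsed if p[0] is not None)]
```

Sorting on the parsed date rather than on the raw string keeps the order correct even if the convention later changes to a date format that does not sort alphabetically.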

The data sender would have to write a script on their end to initiate the VPN or tunnel connection (if one is required), then use an FTP client to log in to the server by providing credentials and/or SSH keys, then locate the inbound folder, and then upload the files to that folder (using a PUT command).

At some point in the day, the data recipient needs to schedule a check to see if there are files in the inbound folder. If so, they’d download them (using the GET command) to their computer and, finally, process them. In essence, their script will be very similar to the data sender’s, except at the end, it would either archive or delete the files from the inbound folder.
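Here is a minimal sketch of the recipient’s side once the files are on the local machine. It assumes the folder names from the sample structure (inbound, inprocess, archive, error) and a hypothetical `handler` function that raises an exception when a file cannot be processed. The download itself is left out, since SFTP needs a client library that is not part of Python’s standard library.

```python
import shutil
from pathlib import Path


def process_inbound(root: Path, handler) -> dict:
    """Move each inbound file through inprocess/ to archive/ or error/."""
    folders = {
        name: root / name
        for name in ("inbound", "inprocess", "archive", "error")
    }
    for folder in folders.values():
        folder.mkdir(parents=True, exist_ok=True)

    outcome = {"archive": [], "error": []}
    for source in sorted(folders["inbound"].iterdir()):
        if not source.is_file():
            continue
        # Claim the file first so a second run does not pick it up again.
        working = folders["inprocess"] / source.name
        shutil.move(str(source), str(working))
        try:
            handler(working)
            destination = "archive"
        except Exception:
            # Keep the failed file for inspection instead of losing it.
            destination = "error"
        shutil.move(str(working), str(folders[destination] / source.name))
        outcome[destination].append(source.name)
    return outcome
```

A scheduler (cron, Windows Task Scheduler, or similar) would call `process_inbound` after each download. Archiving rather than deleting is a design choice here: it keeps a copy around in case a file needs to be processed again.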

As you can see, the above is very similar to how a mailbox works. Someone puts a letter in the box. Later, someone opens the box and retrieves anything that is in there.

An interface like this is simple and quite robust for the most part. However, additional logic must be added to the scripts to handle the cases when things don’t go as planned. For example:

- Connection fails while transferring files

- Corrupted files (usually from interrupting transmissions)

- The recipient is downloading files as the sender is still sending them

- Extraneous files placed there

- Files that fail to process need to be moved back into the inbound folder for re-processing

- and many other cases
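Several of these cases can be softened with a few conventions, sketched below. This is one possible approach, not a standard: the sender uploads under a temporary name and renames the file only when the upload has finished, the recipient ignores anything that does not look like a finished data file, and both sides compare a SHA-256 checksum that the sender is assumed to publish alongside the file. The `.part` suffix and the function names are hypothetical, and `client` stands for any SFTP client object that offers `put(local, remote)` and `rename(old, new)` methods (Paramiko’s `SFTPClient` is one example).

```python
import hashlib
from pathlib import Path

TEMP_SUFFIX = ".part"            # assumed marker for "upload still in progress"
EXPECTED_SUFFIXES = (".csv",)    # assumed set of file types the interface carries


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files do not have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def publish(client, local_path: Path, remote_dir: str) -> str:
    """Upload under a temporary name, then rename once the upload is done."""
    final_name = f"{remote_dir}/{local_path.name}"
    temp_name = final_name + TEMP_SUFFIX
    client.put(str(local_path), temp_name)
    client.rename(temp_name, final_name)
    return final_name


def is_ready(name: str) -> bool:
    """Recipient-side filter: skip in-progress uploads and extraneous files."""
    return not name.endswith(TEMP_SUFFIX) and name.endswith(EXPECTED_SUFFIXES)


def is_intact(path: Path, expected_sha256: str) -> bool:
    """Compare a downloaded file against the checksum the sender published."""
    return sha256_of(path) == expected_sha256.strip().lower()
```

If a connection drops mid-upload, only a `.part` file is left behind, which the recipient never picks up, so the sender can simply retry.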

Summary

FTP is a very good solution if you require a proven file transfer system. It requires some solid understanding of the FTP server’s capabilities, and to automate it, you need some coding/scripting skills. There’s also the added bonus that FTP is a widely used standard, and it has numerous clients, some of which can even help you build the automation scripts.

Using Cloud Storage for Interfaces

Today there are many providers of cloud storage to choose from. Although Microsoft and Google paved the way early on, Box, Dropbox, and even iCloud exploded the market by offering a larger capacity, easier access, and faster synchronization of files.

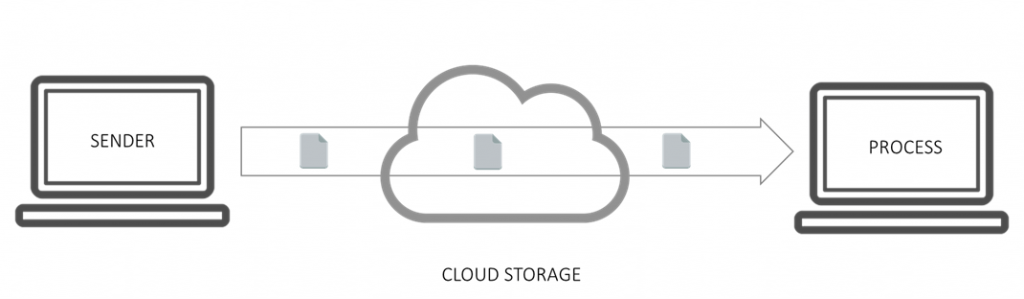

Similar to working with FTP, you can set up a computer with cloud storage client software that syncs files across. Using scripting, you could set up a system very similar to FTP, with the added flexibility that the files are already local and there is no need to use commands to upload and download them. You can use simple commands, like Copy, on the computer that’s processing the file.

As the diagram shows, cloud storage is quite similar to FTP in that the files pass through an intermediary: here, the cloud provider instead of the FTP server.

The biggest issue in adopting such a system is that the sender and the processor need to agree on which cloud provider to use, since they might each have their own IT policies and standards to follow. Some companies go so far as to require that their files be stored in specific regions, which they can’t always control with a cloud storage provider. But they do have more options to choose from than with an FTP server.

One thing to watch out for is the file size limits with your cloud storage provider. Some do not allow an individual file to go over a certain size and all will limit your total storage to your subscription limits. Of course, one way to circumvent this is to move the files to local storage once they have been processed and use the cloud storage only for temporary transit storage.

Real-Time File Transfer

Some cloud storage solutions offer an API that can send a signal when a file is created or updated. That signal can tell the application that processes the files to handle the new or modified file immediately. By comparison, if you’re using FTP, the processor has to poll the inbound folder of the FTP server at regularly scheduled times to see whether new files have arrived.
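To make the FTP side of that comparison concrete, a scheduled check boils down to comparing two listings of the same folder. This sketch is only an illustration: it works on a local folder with Python’s standard library, and the function names `snapshot` and `changes` are made up for this example.

```python
from pathlib import Path


def snapshot(folder: Path) -> dict:
    """Map each file name to (size, modification time) at this moment."""
    return {
        entry.name: (entry.stat().st_size, entry.stat().st_mtime_ns)
        for entry in folder.iterdir()
        if entry.is_file()
    }


def changes(previous: dict, current: dict) -> dict:
    """Compare two snapshots and report what a poller would act on."""
    return {
        "created": sorted(current.keys() - previous.keys()),
        "deleted": sorted(previous.keys() - current.keys()),
        "modified": sorted(
            name
            for name in current.keys() & previous.keys()
            if current[name] != previous[name]
        ),
    }
```

The poller keeps the last snapshot, takes a new one on each scheduled run, and processes whatever shows up under `created` or `modified`. A callback from the cloud provider replaces this loop with a push notification.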

Keep in mind that implementing this “callback” or “hook” is not for the faint of heart. It does require some serious technical skills.
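One reason is that your callback endpoint is reachable from the internet, so it has to check that a notification really comes from the provider. Some providers sign each callback with an HMAC of the request body. Whether yours does, which hash it uses, and which header carries the signature are all assumptions here that you need to check against the provider’s documentation. The function names below are made up for this sketch.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """Hex-encoded HMAC-SHA256 of the raw request body (assumed scheme)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_authentic(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Accept the callback only if the signatures match.

    compare_digest runs in constant time, which avoids leaking how many
    leading characters of a forged signature were correct.
    """
    expected = sign(secret, body).encode("utf-8")
    return hmac.compare_digest(expected, received_signature.encode("utf-8"))
```

The web handler would read the raw body and the signature header, call `is_authentic`, and only then hand the notification to the file processor.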

Summary

Using either FTP or cloud storage to transfer files between disparate systems, locations, companies, and vendors is an acceptable practice.

Differences between FTP and Cloud Storage

Implementation

FTP: Technical expertise and configuration are required, especially to ensure secured transfers using SFTP.

Cloud: Easier implementation, since files sync to a local folder and simple copy and move commands on Windows/Unix systems are easy to script.

Maintenance

FTP: If you maintain your own FTP server, expect a high maintenance load: keeping the server safe from intrusion, and monitoring logs and performance. Even if you host the FTP server with an infrastructure provider, there is a high degree of maintenance involved.

Cloud: There is no maintenance of the provider’s cloud software, servers, or service. The provider takes care of that. However, you still need to maintain the hardware responsible for processing the files, just as with FTP.

Speed of Transfer

FTP: Performance is relative to where the server is located, and the type and size of files. In general, FTP is quite rapid.

Cloud: Cloud sync has come a long way, because cloud providers have servers and high-speed connections across the globe and their services were designed for large files.

Error Handling / Processing

FTP: Although the underlying TCP connection detects and corrects transmission errors, network failures are typically hard to recover from if the sender’s client does not support certain FTP features, such as resuming an interrupted transfer, and they can leave partial or corrupt files behind.

Cloud: Cloud syncing ensures that if the network is interrupted, the transfer will resume and complete normally.

Adoption

FTP: FTP is widely adopted by data vendors and large companies, as it provides a nice buffer/placeholder for files between two companies.

Cloud: With so many cloud providers available, companies can usually agree on a vendor that satisfies both parties’ internal policies and standards, or sometimes simply their budget.
